An introduction to Tray.io

Have you heard about Tray.io? Curious to learn more? We have just the content for you!

An introduction to Tray.io - The power of automation

Video transcript

Introduction

In this video we will explore the power of iPaaS platforms (Integration Platform as a Service).

Specifically, we will focus on Tray.io and why you should consider using it.

Hello and welcome. My name is Enrico Simonetti from Naonis.

The business landscape

In today’s business landscape, you’ll likely rely on multiple specialized applications and data sources to run your operations.

However, those tools don’t always work seamlessly together, resulting in:

- manual data transfers

- repetitive tasks

- and errors.

This wastes:

- time

- money

- and resources

hindering your business goals.

Tray.io overview

That’s where iPaaS platforms like Tray.io come in.

They simplify the process of connecting systems together, allowing you to create workflows that seamlessly integrate your applications and data sources and automate processes.

With Tray.io you can automate tasks, synchronize data, trigger actions and orchestrate processes across your entire business.

The best part is that you do not need to worry about the underlying infrastructure required to run the platform, connecting and retrying connections to a specific system, interfacing with that system’s APIs, logging every run of the integration, or even compliance and security, as most of those aspects are handled for you by Tray.io.

Finally, most of the platform does not need coding to function.

Working in Tray.io

Now let’s have a look at the system itself.

When we open the application we can see that we are in the dashboard. Right now we are looking at a folder called Initial Demo. And the folder represents a project as you can see from the left hand side here.

Now within the project we can create a number of workflows. Workflows are components of an integration.

So the project is an integration and workflows are the multiple components of an integration.

Now let’s start by creating a new workflow. We create it from scratch.

Once we fill in the name, there is nothing else to complete and we can hit next.

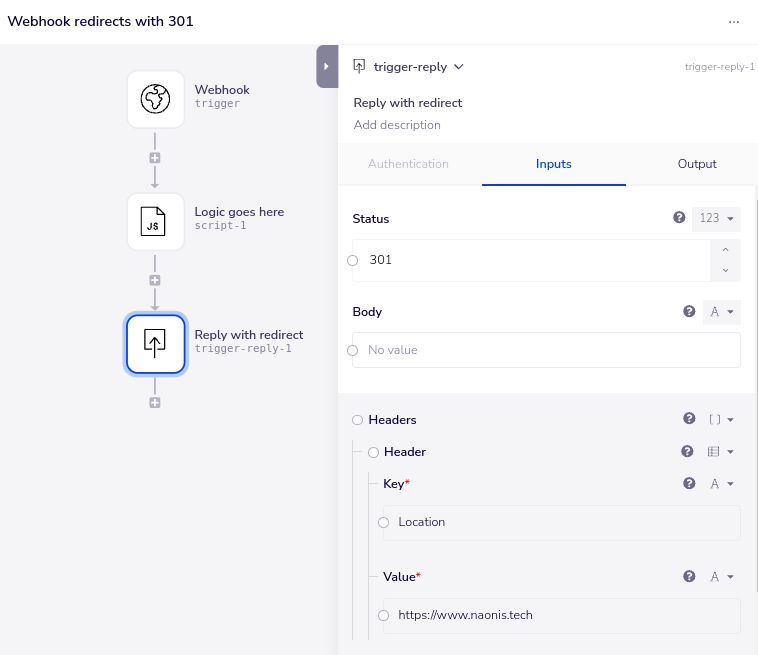

The next step is to select the trigger. The trigger is the starting point of the integration. The most common ones are the manual trigger, which is useful while you are building and debugging because you can trigger the integration at will; the webhook trigger, for integrations started by an incoming webhook; and the scheduler, for integrations that need to run automatically on a schedule. There are other types of triggers as well.

Let’s start with manual in our case because we are building a test example just to showcase the platform. Let’s go ahead and create the workflow.

Now we are in the build screen of the workflow and we can see that the manual trigger is the only thing that appears in the screen right now.

We have two ways to add connectors and operators into this screen. The first one is to click on search on the top left, or we can click on the plus button just below the current trigger. So let’s go ahead and click on it.

Now we need to search for the operation we want, for the connector that we want.

In this case, we’re going to use a sample connector to generate some random data for us.

Now we can try to run the workflow right now, and see what happens. So to run the workflow we can just click on the run workflow at the bottom of the page.

As I said before, this is the build part of the functionality. But if we click on the second part up here the log section, we can see the actual execution that happened on this workflow.

As it was a new workflow, we can see that the only execution we have available is the one we just triggered, and once we click on it, we can see that there are two steps.

They are called execution steps, one for each of the steps present in the integration. If we click on the first one, we can see that it’s the triggering time. And then if we click on the second execution step, we can see that it didn’t have any inputs but it has some output.

As I mentioned, this is the data generation example step which generates a number of customers for us which are always the same. If I want to see this screen bigger, I can just click on expand so we can see that we have a number of fake customers created for us. OK, we can go ahead and close this window and also go back in the build section.

So, based on what we’ve just seen in the log section, we understand that the CRM training step creates a number of customers for us. Once we have a list of customers, what we normally need to do is loop through each one of them to do something with the data that we received.

So let’s go ahead and create a loop by using the loop connector. And there is a loop collection step. If we look at the right panel of the loop collection, we can see that we can select the operator for this specific connector. And we have three options, loop forever, loop list and loop object and we want to leave it as loop list.

Now if we go back to the options, we can see that we need to specify a list to loop through.

Now the simplest way to select the list is to click on this icon and then drag and drop to the item that we want to get data from. Once we release the mouse button, it will show us the output of that specific step and we can click on the customers list. That already populates all the properties for us.

Now let’s give it a go once more and run this integration to see what happens now. What we expect to happen is the same thing as before. We get a log execution and we can have a look at it right now. We click on the log execution and we see many more steps than before.

Before we just had two. The first one is the trigger, the second one was the data generation which we can expand the output of and it is exactly the same as before. And then we have a number of loop executions.

Now if we click on each one of them and expand the output, we can see that we have one record at a time.

In this case we have the first record. And then the second one. And so on until the last one.
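The behaviour seen in the log can be pictured with a few lines of plain Python. This is only an illustrative sketch, not how Tray.io implements its loop connector: the `loop_list` function, the field names and the second customer are all assumptions made up for the example.

```python
# Hypothetical stand-in for the output of the data generation step.
# Field names and the second customer are assumptions, not taken from the video.
customers = [
    {"first name": "Tony", "last name": "Stark"},
    {"first name": "Pepper", "last name": "Potts"},
]


def loop_list(items):
    """Yield one (index, record) pair per iteration, like a 'loop list' operation."""
    for index, record in enumerate(items):
        yield index, record


# Each iteration only sees a single record, which is why the log shows
# one loop execution step per customer.
seen = [record for _, record in loop_list(customers)]
```

Every step placed inside the loop would then run once per record, with that single record as its input.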

Mapping and transformation

Now what we want to do is go back to the build section and do something with that data, one by one.

So we click on this step and we say that we want to use the mapper. The mapper allows us to transform the data. What do we need to do? Well, we again want to select the input, which is the record currently being looped on.

And then for the mapping we want to add some mapping but we need to know exactly what we are doing here.

So what we can do is look at previous log executions.

Go back to the debugging section, pick one of those records and see what the format is. And now on the right hand side we can write the same source field.

So in this case I want to change, for example, the first name field to be first underscore name, and that’s what I’m going to do. I’m going to paste first name as it was passed from the data, and let’s pretend that our destination system accepts the field in a different format, so it calls it first_name. We can add one more: last name, and we call it last_name.

And let’s stop at that for now.
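As a rough mental model, the mapping configured so far behaves like a key rename. The sketch below is plain Python for illustration only. The `map_record` helper and the exact source field names are assumptions, and keeping only the mapped keys is a choice of this sketch rather than a statement about the mapper’s behaviour.

```python
def map_record(record, mapping):
    """Return a new dict whose keys are renamed according to `mapping`.

    `mapping` goes from source field name to destination field name.
    Only mapped fields are kept in this sketch.
    """
    return {target: record[source] for source, target in mapping.items()}


# Source field names are assumed; the destination names come from the walkthrough.
mapping = {"first name": "first_name", "last name": "last_name"}
source_record = {"first name": "Tony", "last name": "Stark"}

mapped = map_record(source_record, mapping)
```

Adding another field later is then just one more entry in `mapping`.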

I believe that’s all we need and now we can run the workflow again.

It takes a few seconds for the third execution to appear, and we can see that in this case something went wrong. So let’s see what happened. When the integration ran successfully, the execution is green and every step inside the execution is also green.

Now the execution is red because something has failed and also within the execution we can see which step is red. Now if we click on it, we can see that the data was passed correctly in the input and we can expand that to see it further. And then we can see that something went wrong on the output.

So let’s see what happened. These keys do not exist in input, so perhaps something went wrong in here. For some reason this required field is now empty, so let’s type it in once again.
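One way to picture this failure is a mapper that checks its source keys before renaming them. The following is a hedged Python sketch: `find_missing_keys` and `map_record_strict` are hypothetical helpers, the field names are assumed, and the error text simply mirrors the message read out from the log.

```python
def find_missing_keys(record, mapping):
    """Return the source keys in `mapping` that are absent from `record`."""
    return [source for source in mapping if source not in record]


def map_record_strict(record, mapping):
    """Rename keys, failing loudly when a mapped source key is missing."""
    missing = find_missing_keys(record, mapping)
    if missing:
        raise KeyError(f"These keys do not exist in input: {missing}")
    return {target: record[source] for source, target in mapping.items()}


record = {"first name": "Tony", "last name": "Stark"}

# An emptied source field, as in the walkthrough, becomes an empty key.
broken_mapping = {"": "first_name", "last name": "last_name"}
missing = find_missing_keys(record, broken_mapping)

try:
    map_record_strict(record, broken_mapping)
    failed = False
except KeyError:
    failed = True
```

Re-entering the source field name corresponds to replacing the empty key with the real one, after which the strict mapping succeeds.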

Let’s confirm that everything is correct now: first name to first underscore name, last name to last underscore name. Let’s try once again.

OK, the execution worked fine this time. Let’s give it a second to show us the steps, and we can see that the loop also ran the mapper on each iteration. We can look inside the actual data and see what the input was, in this case first name Tony, last name Stark. If we look at the output, we can see that it has been transformed into first underscore name and last underscore name.

Now we can also map total revenue and region code, so that we can show how the full data mapping from one system to another might work.

Let’s make sure we don’t misspell it and we do total revenue.

I call it total revenue. And then we add one more, Region code and we call it Region.

Perfect. Let’s try to run it again and see that everything worked once again.

As expected, as soon as the integration runs manually, there we go, we have the execution. We click on it, we have all the steps, we click on one of the data mapper steps, and we can see that the output is as expected, changed by the mappings that we gave it.

Fantastic.

Data storage and retrieval

Now we want to do something else. Let’s go back to build, where we want to store that information temporarily using the storage functionality.

We select append to list and now we have to write which one is the key, the storage variable that is going to house our list: customer list. OK, so that’s going to be our variable and we are going to copy that because we want to retrieve it later.

So let’s copy that and move on to the value. For the value, we want to show you something different. Instead of typing a fixed value in here, we want to get it from the data mapper. The easiest way to do that is to click on this icon here and select JSON Path.

JSON Path is a way to refer to another step by selecting the right step name. So what we want to do is write the dollar sign, then a dot, then the word “steps”, then another dot, and then, since we can see that this step is called data-mapper-1, we type that: data-mapper-1. That gives us $.steps.data-mapper-1, and we use that. OK!
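To make the idea of a path like `$.steps.data-mapper-1` concrete, here is a minimal Python sketch of resolving a dotted path against the outputs of previous steps. It is an assumption-laden illustration: `resolve_path` is a hypothetical helper, the shape of `context` is invented, and it only handles simple dotted keys rather than the full JSONPath syntax.

```python
def resolve_path(path, context):
    """Resolve a simple dotted path such as '$.steps.data-mapper-1'.

    '$' stands for the root of `context`; each following part is a dict key.
    """
    if not path.startswith("$."):
        raise ValueError("path must start with '$.'")
    value = context
    for part in path[2:].split("."):
        value = value[part]
    return value


# Hypothetical view of what earlier steps produced in the current iteration.
context = {
    "steps": {
        "data-mapper-1": {"first_name": "Tony", "last_name": "Stark"},
    }
}

resolved = resolve_path("$.steps.data-mapper-1", context)
```

Because the path names the step rather than a fixed value, the same reference yields a different record on every loop iteration.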

And we also want the system to create that customer list variable if it doesn’t exist. So on this option we say yes, please go ahead and create it for us if it doesn’t exist. Now let’s do one more thing here. Let’s add, outside of the loop so that it runs only once, another data storage step to retrieve all the customers that have been mapped and stored one by one. In this case we select the operation get value from the current run and the key is going to be customer list.

Now we should be good to go. So let’s run the workflow one more time and see if there are any errors in what we just did. We can scroll up and down. If we look at the beginning, we can see that in the first iteration we mapped something and then added it to the storage, and the same happens for the second one, where we process the second record.

We mapped the second record correctly and then we added it to the list that we have. At the end, we have this operation here that retrieves the full list stored under the customer list key, and if we expand the output we can see that we have all the records stored in there with the new mapping.
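The whole pattern (loop, map, append to a list, then read the list once after the loop) can be sketched in Python as follows. Everything here is illustrative: `RunStorage` is a hypothetical in-memory stand-in for storage scoped to the current run, and the key spelling, field names and second customer are assumptions.

```python
class RunStorage:
    """Hypothetical key/value store that lives for a single workflow run."""

    def __init__(self):
        self._values = {}

    def append_to_list(self, key, value, create_if_missing=False):
        # Mirror the "create if it doesn't exist" option from the walkthrough.
        if key not in self._values:
            if not create_if_missing:
                raise KeyError(key)
            self._values[key] = []
        self._values[key].append(value)

    def get_value(self, key):
        return self._values[key]


mapping = {"first name": "first_name", "last name": "last_name"}
customers = [
    {"first name": "Tony", "last name": "Stark"},
    {"first name": "Pepper", "last name": "Potts"},
]

storage = RunStorage()
for customer in customers:
    # Inside the loop: map one record, then append it to the stored list.
    mapped = {target: customer[source] for source, target in mapping.items()}
    storage.append_to_list("customer list", mapped, create_if_missing=True)

# Outside the loop: runs once and retrieves everything that was stored.
all_customers = storage.get_value("customer list")
```

The retrieval sits outside the loop on purpose, so it sees the complete list rather than a partial one.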

So that allows us to process a list of records that was generated for us in this case, but could be coming from a different system, then loop through each one of the records, map every single record and add it to storage, and in the end retrieve the list to perhaps send it to another system in bulk.

Otherwise, we could have added an operation on each record, just here. Let’s go back to the build functionality. If, instead of the first data storage step, we had used an operation to push the record to a CSV or into another system, for example a marketing automation software or a CRM, we could have done so.

The alternative is to append every single record to a list and then process them all in one go, if the other end offers a bulk API to receive records.
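The trade-off between the two approaches can be illustrated by counting calls to the destination. This Python sketch uses a made-up `FakeDestination` class with hypothetical `push_one` and `push_bulk` methods; it does not represent any real connector or API, and the customers other than Tony Stark are invented.

```python
class FakeDestination:
    """Hypothetical destination system that only counts the calls it receives."""

    def __init__(self):
        self.calls = 0
        self.records = []

    def push_one(self, record):
        # One request per record.
        self.calls += 1
        self.records.append(record)

    def push_bulk(self, records):
        # One request for the whole list.
        self.calls += 1
        self.records.extend(records)


mapped_records = [
    {"first_name": "Tony", "last_name": "Stark"},
    {"first_name": "Pepper", "last_name": "Potts"},
    {"first_name": "James", "last_name": "Rhodes"},
]

# Option 1: an operation inside the loop, executed for each record.
per_record = FakeDestination()
for record in mapped_records:
    per_record.push_one(record)

# Option 2: collect the records first, then send them in one go.
bulk = FakeDestination()
bulk.push_bulk(mapped_records)
```

Both destinations end up with the same records, but the bulk variant needs a single call, which is why it is attractive when the receiving system supports it.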

Now you are at least aware of the main functionality and terminology of Tray.io and how to put together a workflow, debug it and iterate on your automations and integrations.

Our Tray.io feature highlights

Now let’s look at some feature highlights. If I had to narrow it down to the top three selling points of Tray.io my three choices would be:

Intuitive

I found Tray.io extremely intuitive to get started with and easy to use. Not only that, but also it allows you to iterate quickly on what you build.

Execution log and search

Tray.io offers a comprehensive debugging and logging mechanism that lets you look at every single execution step, with its inputs and outputs. You are also able to run simple and even complex searches within those steps to uncover specific data flowing into the integration, or to find out why it didn’t. This capability, and especially the search, has got me out of trouble more than once in the past.

Flexibility of code execution

Tray.io has a complete and powerful JavaScript and Node.js connector that lets you build complex payloads or transformations with some code, providing maximum flexibility in just a single execution step.

Bonus point, we have a tool that I will make available in the description that lets you code outside of Tray.io so that you can test your code, potentially even in an automated way, version the code and make sure that the pesky bugs stay as far as possible from your custom code.

This is my personal list of the top three selling points of Tray.io.

Conclusions

Let’s recap what we covered in this video. We covered some basics about iPaaS (Integration Platform as a Service) platforms and why they are good tools to use within your business.

We covered Tray.io-specific terminology. We also built, debugged and iterated on a workflow on Tray.io to showcase how a workflow evolves, and we outlined our top three selling points of Tray.io.

That’s all for this video.

Thank you for watching and feel free to reach out on https://www.naonis.tech if you have any questions, comments, or if you would like our help building integrations on Tray.io.

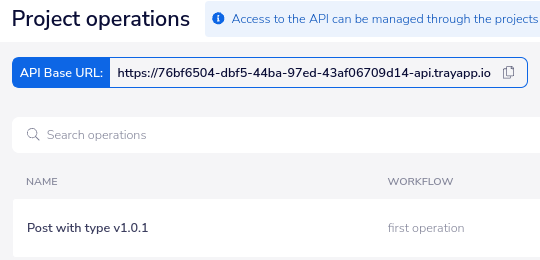

Local JavaScript coding with Tray.io

As mentioned during the video, here is our bonus Tray.io offline scripting tool, to help you build faster, better tested and more robust integrations that contain complex custom scripts.

Do you want to know more about Tray.io and automation?

Reach out to us about Tray.io for an initial conversation. We will talk about your current pain points and how automation and integration can be beneficial to increase efficiency within your business.

Introduction to Tray.io - The power of automation - FAQ

What is Tray.io and why should I consider using it?

Tray.io is an iPaaS (Integration Platform as a Service) that simplifies connecting and automating various systems in your business.

It helps streamline workflows, automate tasks, synchronize data, trigger actions, and orchestrate processes across your entire business.

The platform eliminates the need to worry about underlying infrastructure and API interfacing, and it provides logs for integration runs.

Importantly, most functionalities on Tray.io do not require coding, potentially helping empower more people to create their automations.

How does Tray.io work?

Tray.io operates on the concept of projects and workflows. A project represents an integration, while workflows are the components of an integration.

Users can create workflows, define triggers (such as manual, webhook, or scheduled), and add connectors and operators to build their integrations. The platform supports a visual build interface and provides comprehensive logs for debugging.

What are the key features of Tray.io?

Tray.io offers an intuitive interface for quick iteration, an execution log and search functionality for debugging, and flexibility of code execution through a powerful JavaScript and Node.js connector.

The platform allows users to build, debug, and iterate workflows seamlessly.

Additionally, we at Naonis have helped the community with an offline scripting tool for local JavaScript coding and testing.

How can Tray.io help in data mapping and transformation?

Tray.io simplifies data mapping and transformation through its Data Mapper functionality.

Users can define mappings by referring to input data from previous steps, making it easy to transform data according to specific requirements.

The visual interface allows for the creation of mappings, enabling effective data processing within workflows.

What are the top three selling points of Tray.io?

Intuitive: Tray.io is extremely intuitive and easy to use, with a short learning curve.

Execution Log and Search: The platform offers a comprehensive debugging and logging mechanism, allowing users to scrutinize every execution step and conduct searches within the logs.

Flexibility of Code Execution: Tray.io provides a powerful JavaScript and Node.js connector, offering flexibility for users to incorporate code in their workflows for customization and complex transformations.

How can I learn more about Tray.io and automation?

If you are interested in Tray.io and want to explore its capabilities further, feel free to reach out!

We can start with an initial conversation to understand your specific business needs and discuss how automation and integration can benefit your organization.
